Evangelos Kazakos (Vangelis)

PhD in Computer Science, currently a postdoctoral researcher at CIIRC @ CTU

Welcome! I’m Vangelis, a postdoctoral researcher at CIIRC, CTU Prague. My research focuses on multimodal learning and spatio-temporal understanding in images and videos. I’m part of the IMPACT group, where I work closely with the robotics team to bridge vision and robotics by developing methods for training vision-language-action (VLA) models and world models. I’ve also been a core contributor to several community datasets: EPIC-KITCHENS-55 and EPIC-KITCHENS-100, EPIC-SOUNDS, and more recently HowToGround1M and iGround.

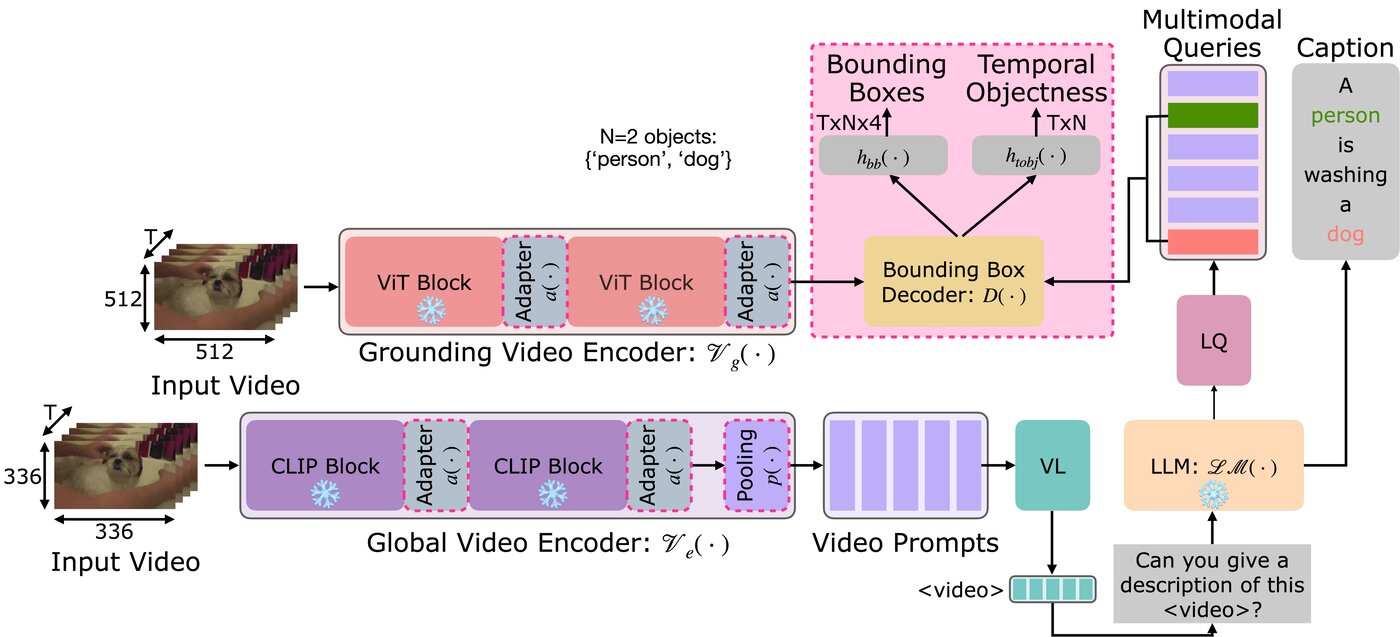

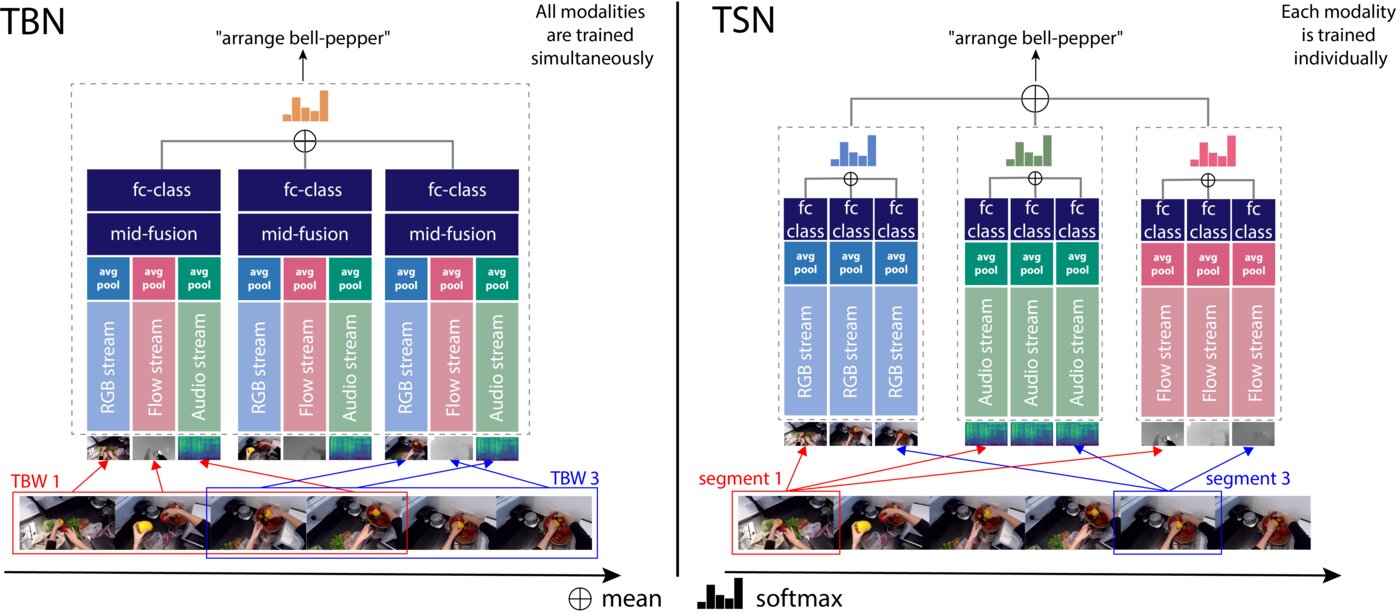

Previously, I completed my PhD at the University of Bristol, where my thesis, “Audio-Visual Egocentric Action Recognition”, highlighted the essential role of audio in understanding actions captured by wearable sensors.

news

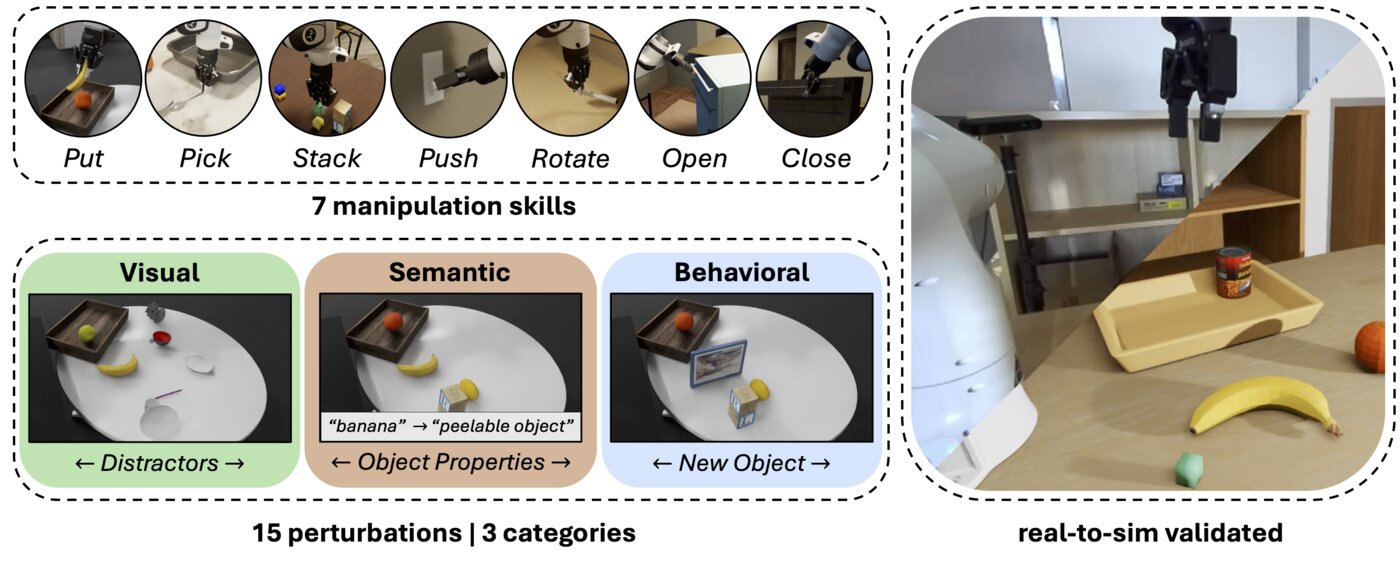

Our paper, REALM, a real-to-sim validated benchmark for robot manipulation, is on arXiv. [arXiv] [Project webpage] [Code]

I’m co-organising the first AI for Peace workshop @ ICLR 2026. [Workshop webpage]

We’ve released grove-transformers, a lightweight, inference-only interface for our GROVE model, implemented with 🤗 Transformers. [🤗 link] [GitHub link]

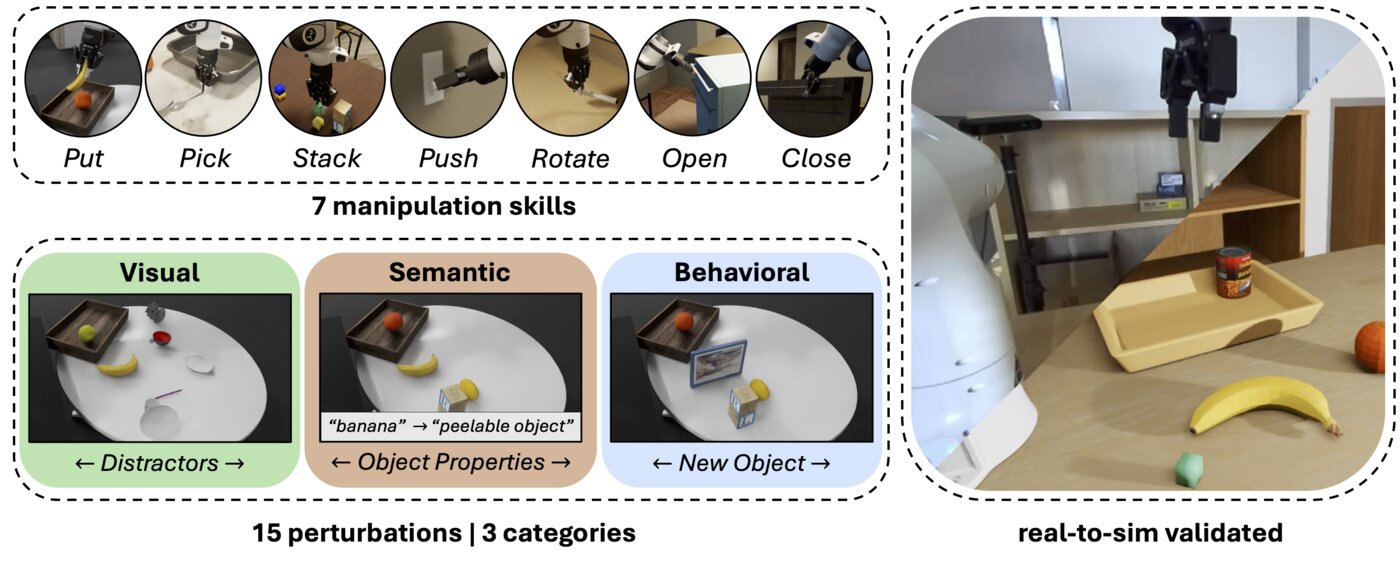

We’ve released datasets (HowToGround1M and iGround), checkpoints and code for our work “Large-scale Pre-training for Grounded Video Caption Generation”. [Project webpage] [Code, checkpoints, data]

I’ve been invited to present our work “Large-scale Pre-training for Grounded Video Caption Generation” at the EuroHPC User Days, in the Artificial Intelligence for Science parallel session, on 1 October 2025 at 11:30. [Event link]

Our work “Large-scale Pre-training for Grounded Video Caption Generation” has been accepted to ICCV 2025!

I was nominated as an Outstanding Reviewer for CVPR 2025. [link]

I presented our work “Large-scale Pre-training for Grounded Video Caption Generation” at the weekly webinar of TwelveLabs. [YouTube link]

Our paper “Large-scale Pre-training for Grounded Video Caption Generation” is now on arXiv. [arXiv] [Project webpage] [Code] (available soon, stay tuned!)

I received the 2024 IJCV Outstanding Reviewer Award. [Announcement]

I started a new role as a Postdoctoral Researcher at the Czech Institute of Informatics, Robotics and Cybernetics (CIIRC) at CTU in Prague. My research will focus on multimodal understanding using video and language.

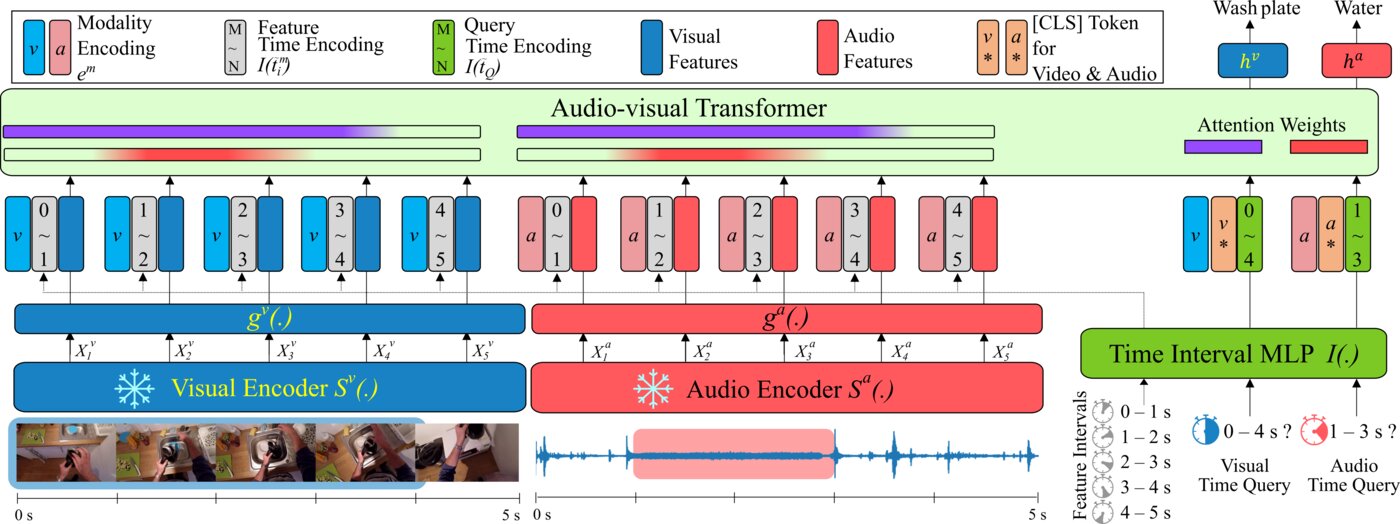

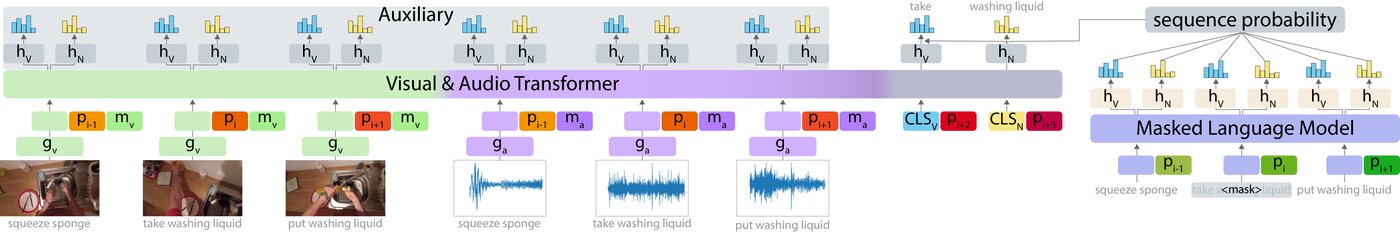

Our paper “TIM: A Time Interval Machine for Audio-Visual Action Recognition” has been accepted at CVPR 2024. [paper] [project page]

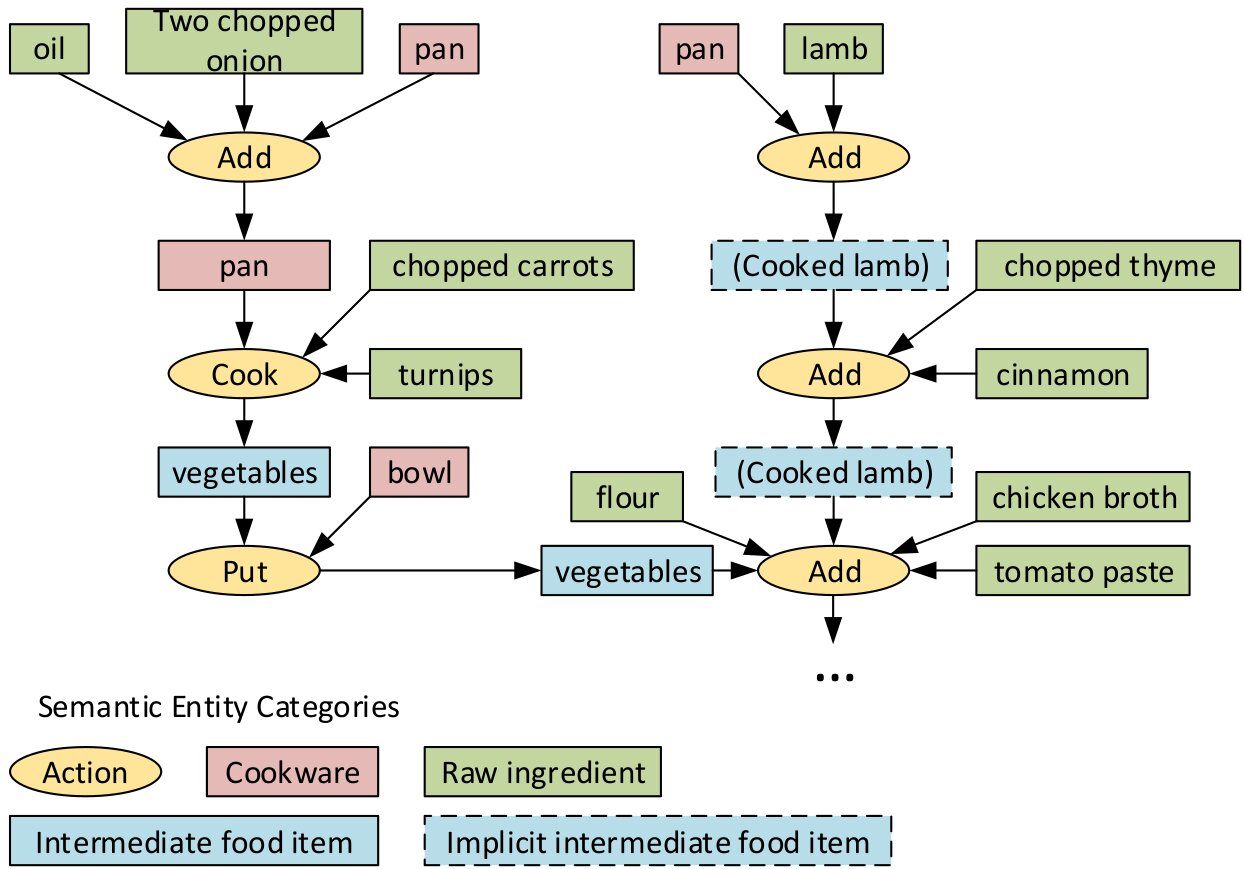

Our paper “Graph Guided Question Answer Generation for Procedural Question-Answering” has been accepted at EACL 2024. [paper]

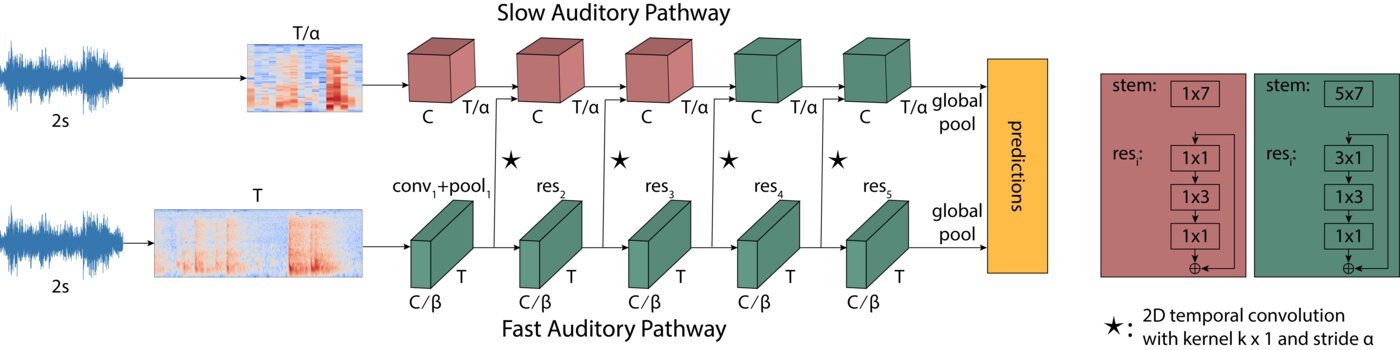

Our paper “Epic-Sounds: A Large-Scale Dataset of Actions That Sound” has been accepted at ICASSP 2023. [paper] [project page]

I joined the Samsung AI Center in Cambridge as a Research Scientist.

I successfully defended my PhD dissertation, titled “Audio-Visual Egocentric Action Recognition”. [link]

publications

datasets

EPIC-KITCHENS-100

IJCV 2022: Rescaling Egocentric Vision: Collection, Pipeline and Challenges for EPIC-KITCHENS-100

EPIC-SOUNDS

TPAMI 2025: EPIC-SOUNDS: A Large-Scale Dataset of Actions That Sound

HowToGround1M

ICCV 2025: Large-scale Pre-training for Grounded Video Caption Generation

iGround

ICCV 2025: Large-scale Pre-training for Grounded Video Caption Generation